Total Training Rows: 269K across 4 training parquets (5 downloaded)

T2I Data: 224.7K, 50% of training (64 GPUs)

IE Data: 41.0K, 40% of training (32 GPUs)

MultiRef Data: 4,017, 10% of training (16 GPUs)

Training GPUs: 128 (16 nodes x 8 H200)

THD:Non-THD: 80:20 per-task ratio

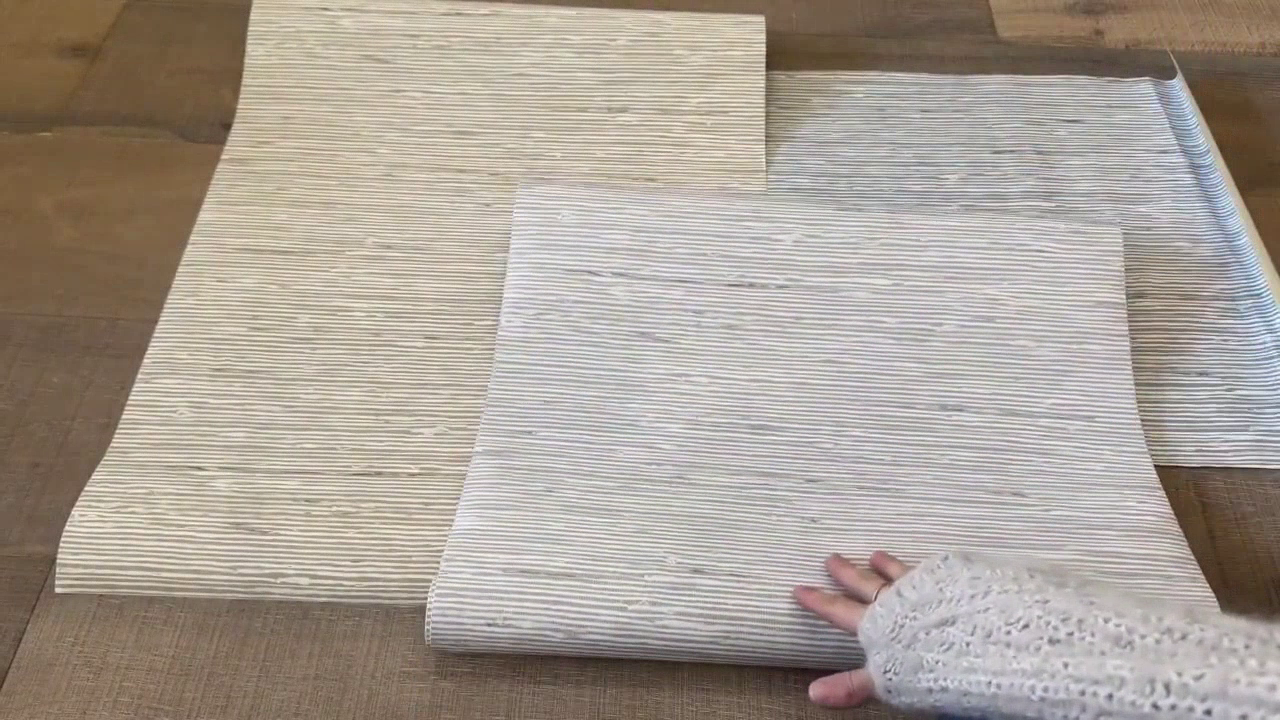

Text-to-Image (T2I) 224,707 images

Home Depot (HD) video frames + stock photos, SCAP v2 captions, 50% GPU allocation


Sampled from 40,530 Home Depot frames | Scenes: wallpaper, pavers, furniture, lawn care, measurement, DIY

Image Editing (IE) 40,964 pairs

Before/After frame pairs with edit instructions, 40% GPU allocation


Sources: Brightcove 49% | Missions 30% | YouTube 20% | Color Corrected 0.5%

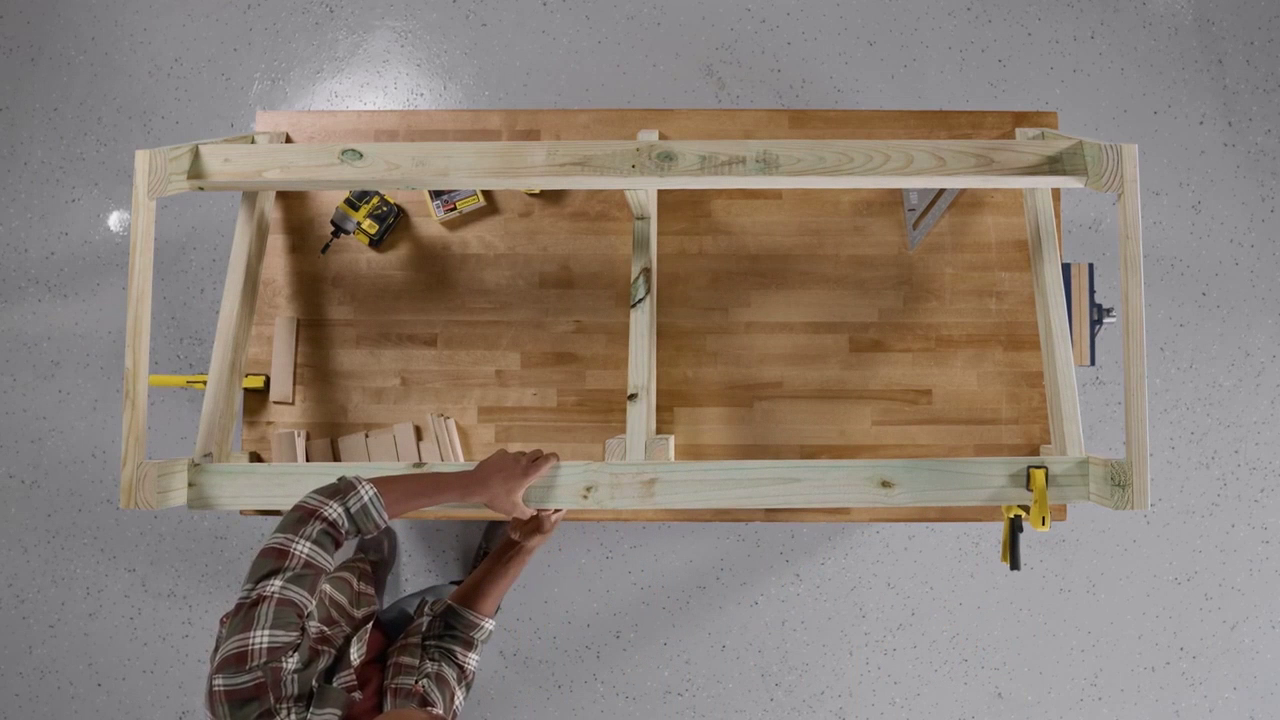

Multi-Reference (MultiRef) 4,017 triplets BOTTLENECK

Scene + Tool references ➔ Composite target, 10% GPU allocation


Vendor batches P2-P6 | 95.4% are 2-reference | avg caption 2,770 words
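Each MultiRef row pairs reference images (typically Scene and Tool) with a composite target. A minimal validation sketch, assuming `reference_images` holds an ordered list of image paths and `num_reference` counts them; `MultiRefSample`, `parse_multiref_row`, and `two_reference_fraction` are hypothetical helpers, and the rows and paths in the usage are made up.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class MultiRefSample:
    """Hypothetical container for one MultiRef row; field names follow the parquet columns."""
    frame_hash_id: str
    reference_images: tuple  # assumed ordering: (scene, tool, ...)
    target_image: str        # composite target
    edit_instruction: str
    caption: str


def parse_multiref_row(row: dict) -> MultiRefSample:
    """Convert a row dict into a sample, rejecting rows whose num_reference disagrees with the list."""
    refs = tuple(row["reference_images"])
    if row["num_reference"] != len(refs):
        raise ValueError(
            f"{row['frame_hash_id']}: num_reference={row['num_reference']}, "
            f"but {len(refs)} reference images listed"
        )
    return MultiRefSample(
        frame_hash_id=row["frame_hash_id"],
        reference_images=refs,
        target_image=row["target_image"],
        edit_instruction=row["edit_instruction"],
        caption=row["caption"],
    )


def two_reference_fraction(rows) -> float:
    """Fraction of rows with exactly two references (reported as 95.4% for this dataset)."""
    counts = Counter(len(r["reference_images"]) for r in rows)
    return counts[2] / sum(counts.values())


# Usage with made-up rows (paths are placeholders, not real S3 keys).
rows = [
    {"frame_hash_id": "a1", "reference_images": ["scene.jpg", "tool.jpg"],
     "target_image": "composite.jpg", "edit_instruction": "Place the drill on the workbench",
     "caption": "A drill resting on a workbench", "num_reference": 2},
    {"frame_hash_id": "b2", "reference_images": ["scene.jpg", "tool.jpg", "tool2.jpg"],
     "target_image": "composite2.jpg", "edit_instruction": "Add both tools to the shelf",
     "caption": "Two tools on a shelf", "num_reference": 3},
]
samples = [parse_multiref_row(r) for r in rows]
print(two_reference_fraction(rows))  # 0.5
```

Failing fast on a count mismatch keeps malformed rows out of a dataset this small, where each row carries a large share of the MultiRef signal.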

Training Data by Task (Total: 269,688 rows)

T2I Home Depot (62,227 - 23.1%)

T2I Stock (162,480 - 60.2%)

IE (40,964 - 15.2%)

MultiRef (4,017 - 1.5%)

GPU Allocation vs Data Volume Mismatch

Charts: GPU Allocation (128 total; config 20260330_lite_thd.yaml) vs. Data Volume (269K rows)

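MultiRef gets 10% of the GPU allocation but holds only 1.5% of the rows (BOTTLENECK): relative to its data volume it is oversampled about 6.7x (10% / 1.49%), so its 4,017 rows are repeated far more often than the other tasks' data.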
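The mismatch can be quantified as allocation share divided by data share. A small sketch, assuming the 50/40/10 split on the cards equals each task's sampling share (with data-parallel groups and equal per-GPU batch size, GPU share and sample share coincide); the helper names are hypothetical.

```python
# Row counts from the parquet table; T2I combines the Home Depot and stock parquets.
ROWS = {"T2I": 62_227 + 162_480, "IE": 40_964, "MultiRef": 4_017}
# Per-task training share from the dashboard cards (assumed to equal the sampling share).
ALLOC = {"T2I": 0.50, "IE": 0.40, "MultiRef": 0.10}


def data_shares(rows: dict) -> dict:
    """Fraction of all training rows held by each task."""
    total = sum(rows.values())
    return {task: n / total for task, n in rows.items()}


def oversampling_factors(rows: dict, alloc: dict) -> dict:
    """Allocation share divided by data share; > 1 means the task is sampled above its data volume."""
    shares = data_shares(rows)
    return {task: alloc[task] / shares[task] for task in rows}


def passes_per_row(rows: dict, alloc: dict, total_samples: int) -> dict:
    """Expected number of times each row is seen when total_samples are drawn with the alloc mix."""
    return {task: alloc[task] * total_samples / rows[task] for task in rows}


for task, factor in oversampling_factors(ROWS, ALLOC).items():
    print(f"{task}: {factor:.1f}x")
# T2I: 0.6x, IE: 2.6x, MultiRef: 6.7x
```

For a hypothetical run of 1,000,000 samples, `passes_per_row` gives roughly 24.9 passes over each MultiRef row versus about 2.2 for T2I, which is where overfitting on the MultiRef pool would show up first.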

Parquet Files Downloaded to foundry_parquets/

| File | Task | Rows | Size | Key Columns | S3 Bucket | Caption Format |
|---|---|---|---|---|---|---|
| t2i_3_18_2026.parquet | T2I | 62,227 | 46.2 MB | frame_hash_id, caption, s3_frame_path, width, height, is_home_depot | foundry-thd-enterprise-adobe-assets | SCAP v2 JSON |
| stock_3_18_2026.parquet | T2I | 162,480 | 120.7 MB | strImagehash, caption, s3_frame_path, width, height, query, is_home_depot | mldp-image | SCAP v2 JSON |
| ie_3_18_2026.parquet | IE | 40,964 | 34.8 MB | frame_hash_id, caption, target_image, reference_images, edit_instruction | foundry-thd-enterprise-adobe-assets | SCAP + Edit Instruction |
| multiref_3_18_2026.parquet | MultiRef | 4,017 | 19.0 MB | frame_hash_id, caption, edit_instruction, reference_images, target_image, num_reference, source | foundry-thd-enterprise-adobe-assets | Rich Caption + Edit Instruction |
| multiref_subset_02262026.parquet | MultiRef-v0 | 2,543 | 3.7 MB | unique_id, reference_images, target_image, source, edit_instruction | foundry-thd-enterprise-adobe-assets | After Caption + Edit Instruction |
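The 269,688-row training total is the sum of the first four parquets; the MultiRef-v0 subset (2,543 rows) is downloaded but not counted. A minimal sketch that encodes the table as a manifest and checks both the total and a file's schema; `ParquetInfo`, `MANIFEST`, `TRAINING_TASKS`, `training_rows`, and `missing_columns` are hypothetical names. Against a real file, the column list could come from `pyarrow.parquet.read_schema(path).names` (not called here, so the sketch runs without pyarrow).

```python
from typing import FrozenSet, List, NamedTuple


class ParquetInfo(NamedTuple):
    """One row of the parquet table above."""
    name: str
    task: str
    rows: int
    key_columns: FrozenSet[str]


MANIFEST: List[ParquetInfo] = [
    ParquetInfo("t2i_3_18_2026.parquet", "T2I", 62_227, frozenset(
        {"frame_hash_id", "caption", "s3_frame_path", "width", "height", "is_home_depot"})),
    ParquetInfo("stock_3_18_2026.parquet", "T2I", 162_480, frozenset(
        {"strImagehash", "caption", "s3_frame_path", "width", "height", "query", "is_home_depot"})),
    ParquetInfo("ie_3_18_2026.parquet", "IE", 40_964, frozenset(
        {"frame_hash_id", "caption", "target_image", "reference_images", "edit_instruction"})),
    ParquetInfo("multiref_3_18_2026.parquet", "MultiRef", 4_017, frozenset(
        {"frame_hash_id", "caption", "edit_instruction", "reference_images",
         "target_image", "num_reference", "source"})),
    ParquetInfo("multiref_subset_02262026.parquet", "MultiRef-v0", 2_543, frozenset(
        {"unique_id", "reference_images", "target_image", "source", "edit_instruction"})),
]

# Tasks counted in the 269,688-row total; MultiRef-v0 is downloaded but not part of it.
TRAINING_TASKS = {"T2I", "IE", "MultiRef"}


def training_rows(manifest) -> int:
    """Sum rows over parquets whose task feeds training."""
    return sum(p.rows for p in manifest if p.task in TRAINING_TASKS)


def missing_columns(info: ParquetInfo, actual_columns) -> set:
    """Key columns listed in the manifest but absent from a file's actual schema."""
    return set(info.key_columns) - set(actual_columns)


print(training_rows(MANIFEST))  # 269688
```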

THD vs Non-THD Split (T2I only)

T2I Home Depot Parquet (62,227)

65.1% Home Depot (40,530 rows), 34.9% non-HD (21,697 rows) within this parquet

Stock Parquet (162,480)

100% non-HD (professional stock photos matched by query)

Training config note: the YAML applies the 80:20 THD:non-THD ratio at training time through sampling weights.

Effective THD data for T2I: the 40,530 Home Depot frames from the HD parquet, sampled at 80% weight.

The 162K stock rows provide the non-THD portion, including keyword-matched, THD-adjacent content.
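The YAML's actual sampling code is not shown here, so what follows is only a sketch of one common way to realize an 80:20 ratio: a two-stage weighted sampler that first picks a pool, then picks an item uniformly within it. `make_thd_sampler` and `per_item_rate_ratio` are hypothetical helpers, and treating the stock parquet as the whole non-THD pool is an assumption taken from the note above.

```python
import random
from typing import Callable, Sequence, TypeVar

T = TypeVar("T")


def make_thd_sampler(thd_pool: Sequence[T], non_thd_pool: Sequence[T],
                     thd_weight: float = 0.8, seed=None) -> Callable[[], T]:
    """Two-stage sampler: choose the THD pool with probability thd_weight
    (else the non-THD pool), then draw one item uniformly from that pool."""
    if not thd_pool or not non_thd_pool:
        raise ValueError("both pools must be non-empty")
    rng = random.Random(seed)
    pools = [thd_pool, non_thd_pool]
    weights = [thd_weight, 1.0 - thd_weight]

    def draw() -> T:
        pool = rng.choices(pools, weights=weights, k=1)[0]
        return rng.choice(pool)

    return draw


def per_item_rate_ratio(n_thd: int, n_non_thd: int, thd_weight: float = 0.8) -> float:
    """How many times more often one THD item is drawn than one non-THD item."""
    return (thd_weight / n_thd) / ((1.0 - thd_weight) / n_non_thd)


# Stock-only non-THD pool, as in the note above.
print(round(per_item_rate_ratio(40_530, 162_480), 1))  # 16.0
# If the 21,697 non-HD rows of the HD parquet also join the non-THD pool.
print(round(per_item_rate_ratio(40_530, 162_480 + 21_697), 1))  # 18.2
```

Under this reading, each Home Depot frame is seen about 16x as often as each stock frame, so the 80:20 weighting concentrates T2I training on the smaller THD pool much as the task-level mix concentrates it on MultiRef.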